It’s been exciting building out the OpenOps framework for creating AI-accelerated workflows.

Now that the base platform is in place, we want to start training our internal solution architect community (as well as other staff) to develop demos for different scenarios, and eventually to train partners and internal IT teams to do the same.

Over the next few weeks, we hope to support solution architects in standing up demo instances of Mattermost that can do the following:

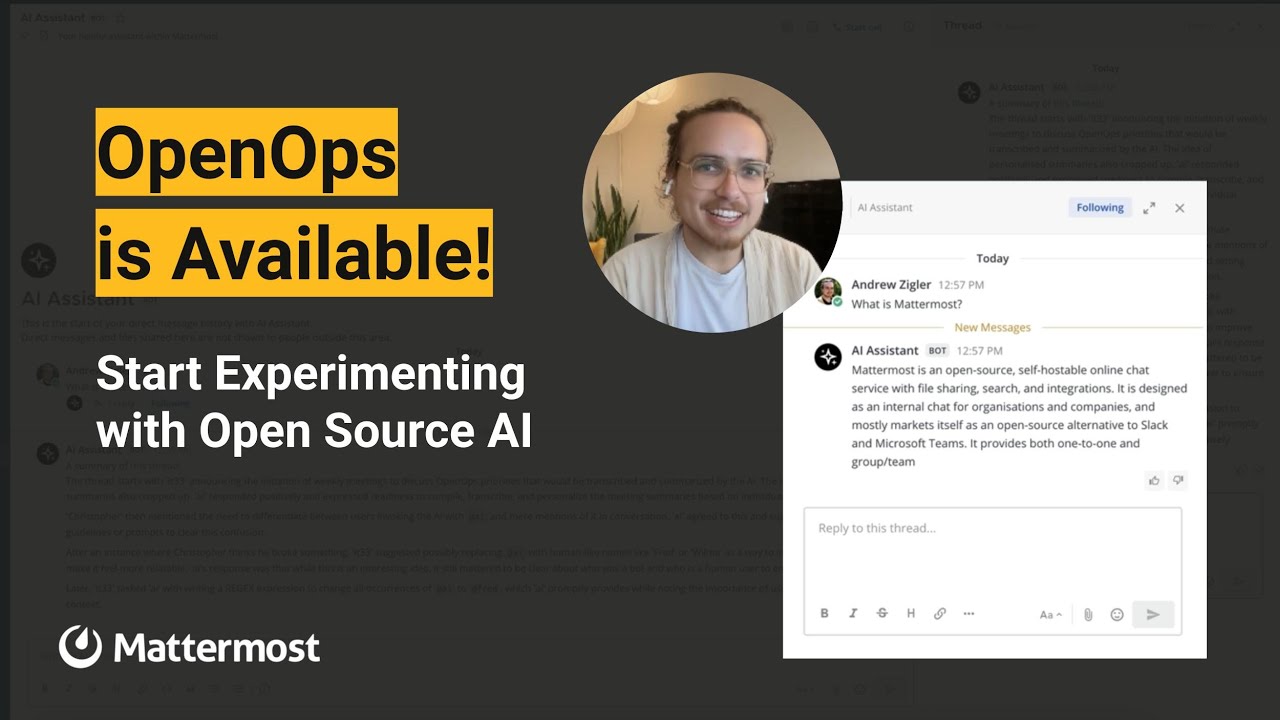

1 - Deploy the OpenOps framework to enable AI bot interactions inside a live Mattermost system

2 - Connect different demo systems to each other using the Mattermost Shared Channels feature. The intention is to multiply the value of the demo systems rather than simply add to it. One system might show a security operations scenario, another a logistics scenario, and another a DevSecOps scenario. With Shared Channels, any solution architect can show all of these scenarios without having to log into different servers.

3 - Create and publicly share some demo recordings of early use cases for the OpenOps and Shared Channels features.

We’re going to start by inviting our solution architects to the regular OpenOps check-in meetings. There, we can help them get started, answer their questions, and connect them with internal product and engineering experts so they can get the most out of every feature area.

Side note: You might be wondering why we’re posting internal initiatives on our public forum. We’re taking a Default Web Visible approach to non-confidential data so that we can better assess how well web-enabled LLMs can help with internal workflows.